RipX has received a huge amount of positive press and awards since its release a year ago – however, did you know its development began way back in 2001?

2001-2003: Early Research & Development

Martin Dawe, the creator of RipX, had been working since 1996 on PhotoScore, a successful app for his company Neuratron that scans sheets of music and converts them into editable and playable music notation. After 5 years of gaining experience creating original algorithms for computer vision, he wondered whether he could apply his skills to computer audio, and invent an AI that would allow a machine to perceive audio in the same way we do.

The original concept was not specifically conceived to separate audio, but to automatically generate a musical score from a microphone or CD. Even though PhotoScore had quickly become accepted as the world’s leading music scanning software, Martin had had no prior experience in the field of image recognition when he started Neuratron. It was the same story with audio processing, and his first question was a big one: whilst it is trivial to see the notes on a printed score, how is it possible to locate a note within a waveform, when the waveform is a complex blend of sine waves describing how notes sound? It was a task as seemingly impossible as retrieving the original ingredients from a baked cake.

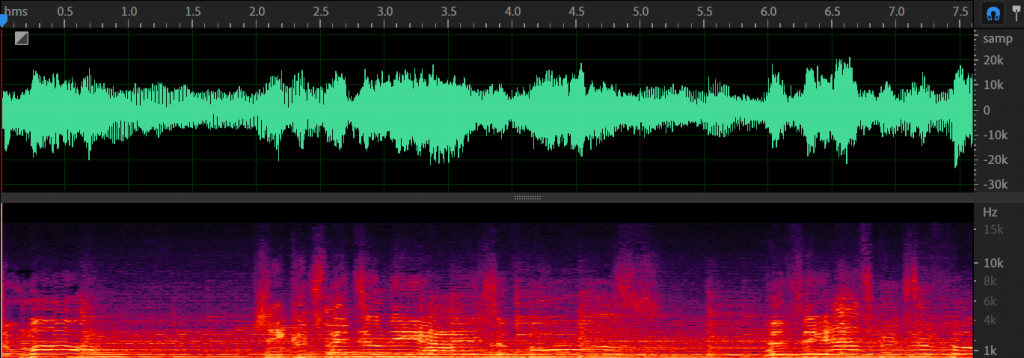

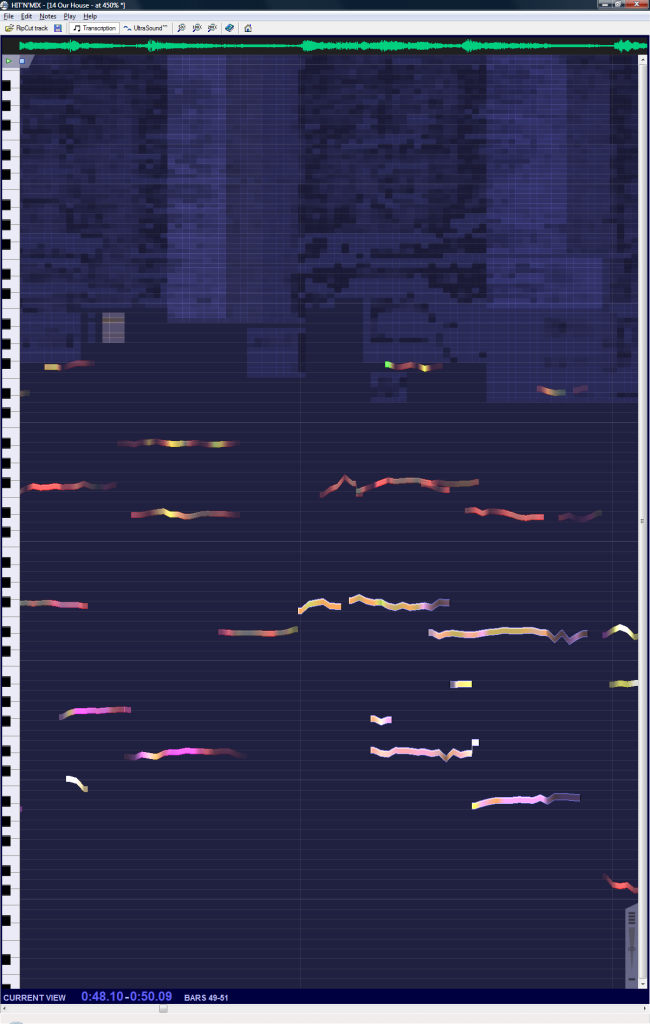

Initial research focussed on FFTs (Fast Fourier Transforms), which are still used today in DAWs to generate spectrograms. An FFT splits a waveform into its component frequencies over time. The frequency components that make up the sound of a note are called harmonics, so this seemed a good place to start.

With the internet still in its infancy, Martin struggled with FFTs and so arranged a meeting with a leading London university professor, in the hope of sponsoring a research project and learning how FFTs worked. Unfortunately, the professor did not take a shine to Martin, who was barely older than the professor’s post-grads and had succeeded in fields (like music scanning) where the professor had not. The professor seemingly lost his mind and, consumed in a fit of rage, sent Martin running from the college, never to return!

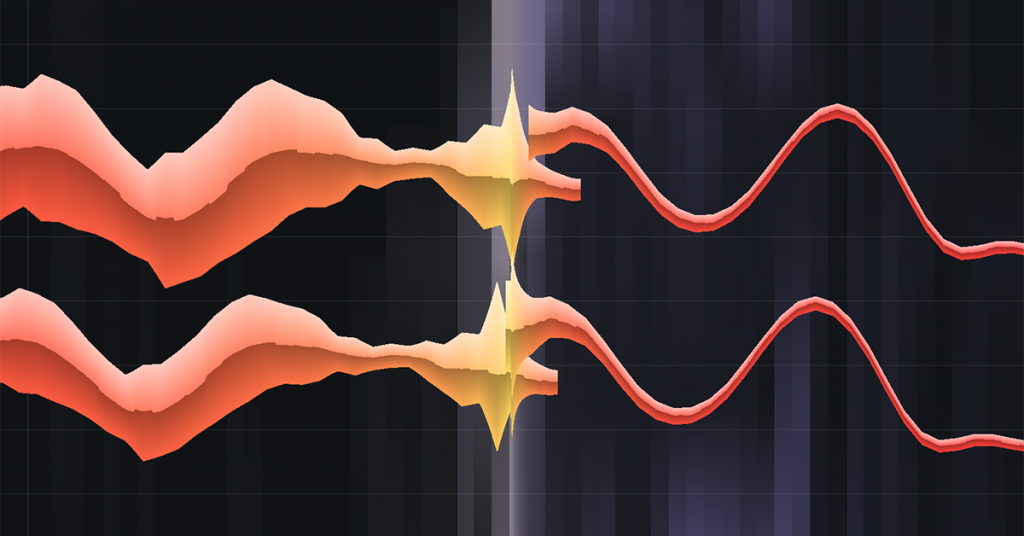

Eventually, after absorbing the knowledge of a few great books (like Digital Signal Processing by Steven W Smith), the subject matter was tamed. However, it was discovered that if you wanted to get great detail about where harmonics exist on the frequency scale, you lost detail about when they exist – and vice versa. This was quite frustrating as the sweet spot required for detecting vibrato and sudden pitch changes seemed unobtainable. The first major breakthrough (made with the assistance of Martin’s mathematician & physicist brother Dr David Dawe) was in blending the results of several FFTs (some great at pin-pointing frequencies, others working better with start and end times) into one ‘super-FFT’.
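The trade-off between frequency detail and timing detail can be put into numbers. The sketch below is only an illustration of the general FFT property, not of the 'super-FFT' itself; the function name `fft_resolution` and the 44.1 kHz sample rate are assumptions chosen for the example.

```python
def fft_resolution(sample_rate_hz, window_size):
    """Return (frequency bin spacing in Hz, window duration in seconds).

    A longer analysis window gives finer frequency bins, but each
    result then describes a longer stretch of time.
    """
    bin_spacing_hz = sample_rate_hz / window_size
    window_seconds = window_size / sample_rate_hz
    return bin_spacing_hz, window_seconds


# Assumed CD-style sample rate; window sizes are typical powers of two.
for window_size in (256, 1024, 4096, 16384):
    bin_hz, seconds = fft_resolution(44100, window_size)
    print(f"{window_size:>6} samples: {bin_hz:8.2f} Hz per bin, "
          f"{seconds * 1000:7.1f} ms per window")
```

Whatever window size is chosen, the bin spacing multiplied by the window duration is always 1, which is why a single FFT cannot be sharp in both frequency and time at once.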

As mentioned above, notes are made up of harmonics. In a simple case, there is a fundamental harmonic which is at the same frequency as the pitch you hear. Then there are others which are multiples of the fundamental harmonic’s frequency, which give a note its sound. So, can you simply find the lowest frequencies that exist to discover the note pitches?

Sadly, that proves to be too easy: Just imagine you are listening to a drum’n’bass track on a tinny speaker. The fundamentals of the lowest notes cannot be reproduced, but the low notes still sound the same pitch. How? The brain does a lot more processing of audio than one might think, and so this deciphering of harmonic frequencies into audible notes was one of the greatest challenges for many years to come.
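To see why the lowest frequency alone is not enough, here is a toy sketch of inferring a pitch whose fundamental is missing. It is not how RipX works; it simply looks for the highest frequency of which every detected harmonic is (nearly) a whole-number multiple. The function name `estimate_fundamental` and its `max_divisor` and `tolerance` parameters are hypothetical.

```python
def estimate_fundamental(harmonics_hz, max_divisor=8, tolerance=0.03):
    """Guess the perceived pitch from a set of harmonic frequencies.

    Tries lowest/1, lowest/2, ... as candidate fundamentals and returns
    the first (highest) candidate that every harmonic is close to an
    integer multiple of. Returns None if no candidate fits.
    """
    lowest = min(harmonics_hz)
    for divisor in range(1, max_divisor + 1):
        candidate = lowest / divisor
        ratios = [f / candidate for f in harmonics_hz]
        if all(abs(r - round(r)) <= tolerance for r in ratios):
            return candidate
    return None


# A 110 Hz bass note on a tinny speaker: the 110 Hz fundamental is gone,
# but the harmonics at 220, 330 and 440 Hz still imply it.
print(estimate_fundamental([220.0, 330.0, 440.0]))
```

Real recordings are far messier (inharmonic partials, noise, overlapping notes), so this only illustrates the idea that pitch is implied by the pattern of harmonics rather than by the lowest one present.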

2004-2009: AudioTune & AudioScore

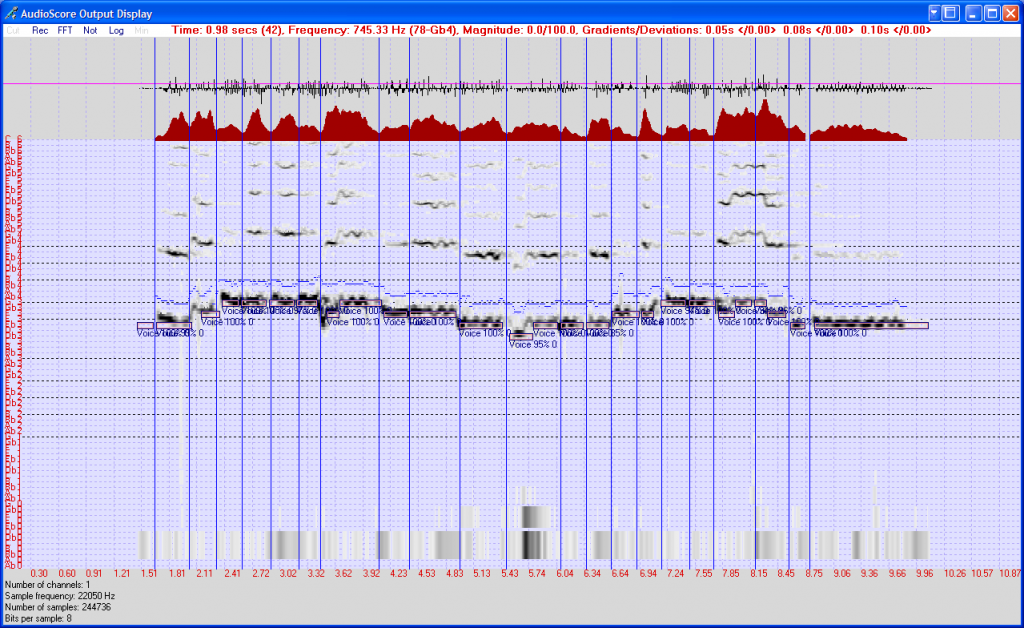

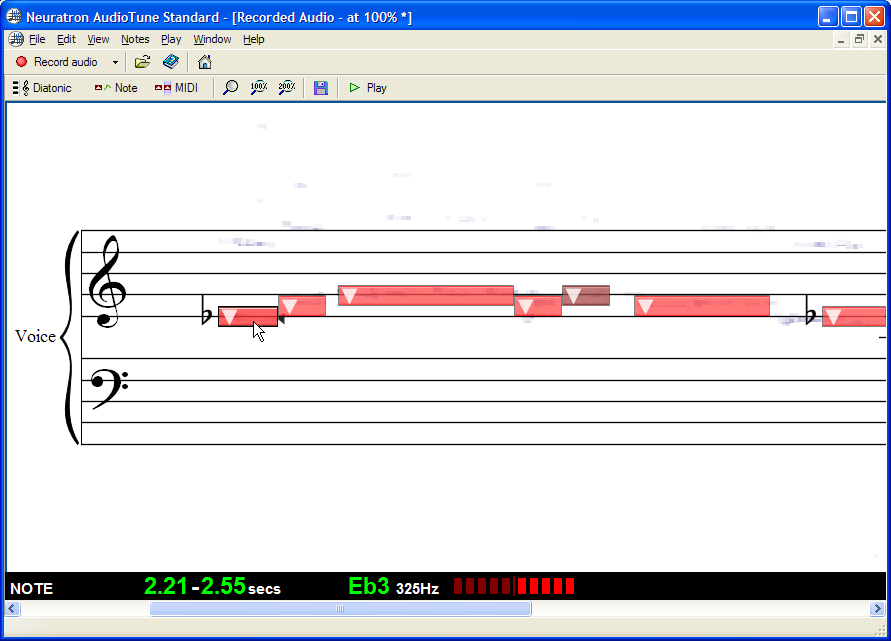

Given the complexity of harmonics in music, the first commercial goal was to create software that could pick out the notes in audio where only one was played at a time, for example to capture live singing as MIDI. After three years of experimentation, AudioTune was released in 2004. This recognized the notes in monophonic audio and exported them as MIDI files. Two years later, the first version of AudioScore was born, which added the ability to convert the audio into editable notation and even send files to score-writing programs like Sibelius.

Monophonic audio was a good starting point, but it was clear that people wanted AudioScore to recognize chords as well as simple polyphonic parts.

Sadly, the existing super-FFT algorithm wasn’t super enough – it simply didn’t have the accuracy to separate out all the close harmonics from multiple notes played at the same time. So Martin went about developing a very high resolution system from scratch, abandoning FFTs pretty much altogether.

This was improved and chipped away at for over 10 years, and is still the basis for the latest versions of RipX, and the Rip Audio format.

Just as significantly, where there are multiple notes played at once, there are many more harmonics. To make matters even trickier, it is very common for harmonics from different notes to overlap in frequency. Working out which harmonics belong to which notes is a pretty mind-curdling problem to solve, but work began about this time, and today, the solution is quite effective.

Up until this point, the focus had been on detecting the pitches of notes. However, over the next few years it became apparent that better pitch detection could be achieved by ever more detailed analysis of the audio.

2010-2018: Hit’n’Mix & Hit’n’Mix Play

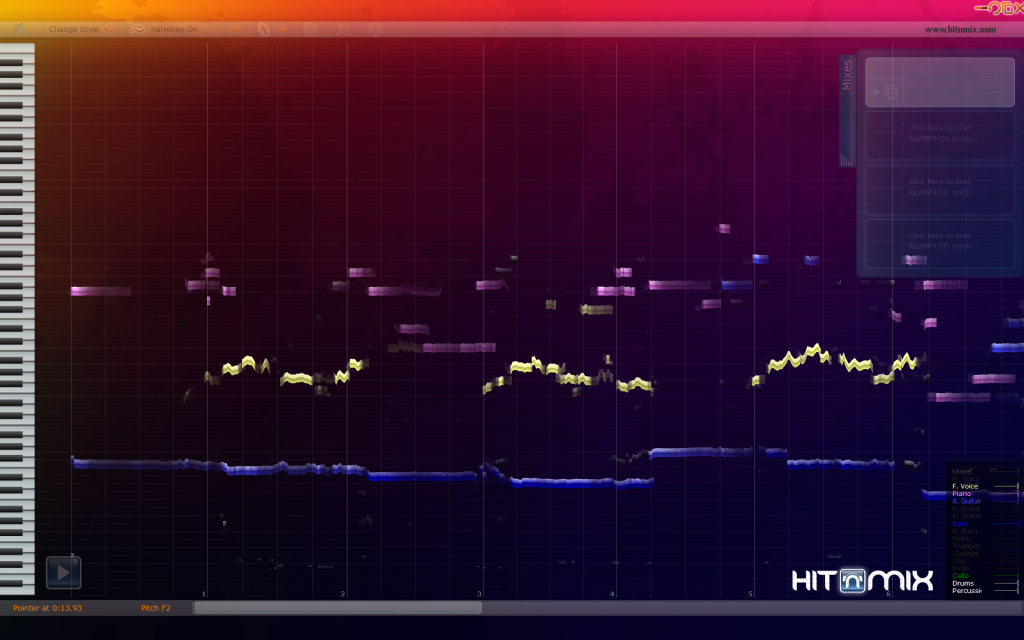

The detected detail of pitch changes, and the harmonics belonging to notes, eventually became so high that Martin began experimenting with using that information (the individual harmonic frequencies, amplitudes, & phases) to regenerate waveforms and play back notes by themselves.

This was the turning point at which individual notes could be played back separately from the rest of a track.
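Regenerating a waveform from harmonic frequencies, amplitudes and phases is a form of additive synthesis. The following is a minimal sketch of that general technique, assuming each harmonic stays constant for the whole note (real notes change over time); the function name `synthesize_note` and its parameters are illustrative, not part of Hit’n’Mix.

```python
import math


def synthesize_note(harmonics, duration_s, sample_rate_hz=44100):
    """Sum sine waves to rebuild one note as a list of samples.

    harmonics: iterable of (frequency_hz, amplitude, phase_radians).
    """
    harmonics = list(harmonics)
    sample_count = int(duration_s * sample_rate_hz)
    samples = []
    for i in range(sample_count):
        t = i / sample_rate_hz
        samples.append(sum(
            amplitude * math.sin(2 * math.pi * frequency * t + phase)
            for frequency, amplitude, phase in harmonics
        ))
    return samples


# A 220 Hz note with two quieter overtones, lasting a tenth of a second.
note = synthesize_note(
    [(220.0, 1.0, 0.0), (440.0, 0.5, 0.0), (660.0, 0.25, 0.0)],
    duration_s=0.1,
)
print(len(note))
```

Because each note is rebuilt only from its own harmonics, it can be played back without the rest of the track.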

Of course, the results weren’t great to begin with, but it was exciting that you could hear the isolated notes at all. This spurred further development into getting out even more note detail, leading to higher quality audio reproduction.

One day Martin thought: how great would it be if you could take a track and manipulate the individual notes however you wished? And so Hit’n’Mix was born.

However, as entertaining as Hit’n’Mix was to use in 2010, the audio quality wasn’t yet high enough for professional work. We marketed it as fun music software to mess around with, and the free version was called Hit’n’Mix Play. Effects and automatic changes of scale/mode were included to help people mangle their music even more!

A great memory is when BBC News came to our office to film a feature on Hit’n’Mix. We were even given permission to remix the BBC News theme tune!

In the years that followed, successive versions of Hit’n’Mix were created. Each new release included significant improvements to audio and separation quality, but Martin did not feel they were yet good enough to market to the masses, other than with quiet updates on the website. Efforts were also slowed during this time as Martin felt he was hitting a wall with development, and so dabbled in other projects, such as handwritten music recognition using a stylus, for the new tablet devices that were starting to appear.

2019-2020: Hit’n’Mix Infinity

2019 arrived, and finally it was felt that Hit’n’Mix version 4 would be good enough to release to the professional market. Scripting capabilities were added, allowing audio to be programmatically adjusted in endless ways using Python. We wrote a number of RipScripts, including one called Audioshop, which enabled highly detailed editing of audio at a level not seen before. Hit’n’Mix Infinity was born, and it received great press from the get-go as a very different and dynamic kind of ‘atomic’ audio editor.

2021-Today: RipX DeepRemix & DeepAudio

At the same time that Martin was working on his algorithms for separating notes, there was a rapid rise in the use of machine learning: Teaching computers how to recognize images and speech, simply by showing them many examples. Eventually this work expanded into being able to separate waveforms into stems, like vocals, drums, bass and other instruments.

Whilst the Hit’n’Mix algorithms for separating notes within chords and other polyphonic music work well, because they are based on the detection of harmonics, it is sometimes difficult to separate notes from, say, vocals and piano, when they are at the same pitch.

However, the new advances in machine learning meant higher quality audio separation of notes at the same pitch might be achievable, so we ran some experiments and saw sufficient potential to design and build a hybrid engine using the combined powers of machine learning and our existing algorithms.

Various improvements were also added to separate kick drums, other drums and percussion. RipX was the result, released on May 20, 2021.

The name RipX was chosen because we could see that Rip Audio was an important new way of storing and processing audio, and we needed a platform (dubbed the Future Audio Platform) to support this. Two modules for this platform, DeepRemix and DeepAudio, were launched to great acclaim. Further modules to take advantage of this technology are in the pipeline, so watch this space!

To The Future: The Rip Audio Format

The present-day Rip Audio format is an evolution of the way recognized notes were stored in early versions of AudioScore. It is a complete departure from waveforms, storing full stereo audio as note and harmonic objects, each containing all the panning, amplitude and pitch information required to recreate the audio as waveforms, so that it can then be played through speakers and headphones.

As well as providing direct control over notes and harmonics, and the ability to display the musical information fluidly on-screen, Rip Audio has the advantage of being able to apply highly dynamic and sophisticated edits and effects when playing back live audio, all using minimal processing power.
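As a rough illustration of why storing audio as note and harmonic objects makes edits cheap, consider transposition. The classes below are purely hypothetical and do not reflect the real Rip Audio layout; they only assume that a note holds a set of harmonics, each with a frequency, amplitude and pan position.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Harmonic:
    # Hypothetical fields, not the actual Rip Audio schema.
    frequency_hz: float
    amplitude: float
    pan: float  # -1.0 = hard left, 1.0 = hard right


@dataclass(frozen=True)
class Note:
    harmonics: tuple


def transpose(note, semitones):
    """Shift a note's pitch by scaling every harmonic's frequency."""
    ratio = 2 ** (semitones / 12)  # equal-temperament frequency ratio
    return Note(tuple(
        replace(h, frequency_hz=h.frequency_hz * ratio)
        for h in note.harmonics
    ))


a3 = Note((Harmonic(220.0, 1.0, 0.0), Harmonic(440.0, 0.5, 0.0)))
a4 = transpose(a3, 12)  # up one octave
print([h.frequency_hz for h in a4.harmonics])
```

In this sketch, changing pitch is a handful of multiplications per note rather than a resampling pass over the whole waveform, which is the kind of saving the paragraph above describes.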

More background about what makes the Rip Audio format so special can be found in the article More Than A Waveform by Resolution magazine.


Amazing!? Don’t you have a linux version (debian/ubuntu) of your software? Your software is so amazing that I might want to consider OS after 15 years just to try it out 😀

What a truly inspiring story of perseverance! Thank goodness for the minds of people like Martin Dawe who keep pushing on despite the frustrations of technical problems that seem insurmountable. As a guitar player, I have to say RipX is a total game changer.
